Efficient Audio Enhancement with a Differentiable Psychoacoustic Loss

LCTI, Télécom Paris, IP Paris, France

Abstract

Audio enhancement consists of improving the perceived quality of audio signals. To address bandwidth extension, this work first proposes $\textrm{AEROMamba}_{\textrm{P}}$, an efficient variant of the $\textrm{AERO}$ super-resolution architecture in which the attention and LSTM layers are replaced by the Mamba state-space model, and which incorporates a newly developed differentiable perceptual loss derived from the Perceptual Audio Quality Measure (PAQM). During training, the architecture requires approximately 2–4x less GPU memory than the baseline; during inference, it achieves a 14x speedup while using only about one-fifth of the GPU memory. When upsampling both a piano dataset and MUSDB18 from 11.025 kHz to 44.1 kHz, subjective listening tests show that $\textrm{AEROMamba}_{\textrm{P}}$ outperforms $\textrm{AERO}$ by 15% in perceived quality scores. Next, to enhance audio signals that have been heavily compressed by lossy coding, this work further proposes $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$, which applies the same framework but replaces the STFT reconstruction losses with the PAQM loss, specifically to enhance MP3-encoded audio at 32 kbps. In listening evaluations, $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ achieves a 52% higher quality rating than $\textrm{AEROMamba}_{\textrm{P}}$ when restoring compressed audio. These results demonstrate that PAQM-driven training coupled with lightweight state-space modeling yields high perceptual quality and computational efficiency in both band-limited and compressed audio scenarios.

Results: Super-resolution of Bandlimited Audio

Objective (ViSQOL, LSD) and subjective scores on the MUSDB and PianoEval datasets, together with performance metrics measured on an NVIDIA RTX 3090 GPU for 10-second samples.
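For readers unfamiliar with LSD (log-spectral distance), the definition commonly used in the audio super-resolution literature is reproduced below. This is a reference sketch, not necessarily the exact variant used for these tables: the STFT parameters and any numerical floor inside the logarithm are not stated on this page. Here $S$ and $\hat{S}$ denote the STFTs of the reference and the estimated signal, $T$ is the number of frames and $K$ the number of frequency bins.

$$
\mathrm{LSD}(S, \hat{S}) = \frac{1}{T} \sum_{t=1}^{T} \sqrt{\frac{1}{K} \sum_{k=1}^{K} \left( \log_{10} |S(t,k)|^{2} - \log_{10} |\hat{S}(t,k)|^{2} \right)^{2}}
$$

Lower values indicate that the estimated log-power spectrum is closer to the reference.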

MUSDB Results

| Model | ViSQOL ↑ | LSD ↓ | Score ↑ |

|---|---|---|---|

| $\textrm{Low-Resolution}$ | 1.82 | 3.98 | 38.22 |

| $\textrm{AERO}$ | 2.90 | 1.34 | 60.03 |

| $\textrm{AEROMamba}$ | 2.93 | 1.23 | 66.47 |

| $\textrm{AEROMamba}_{\textrm{P}}$ | 3.04 | 1.19 | 79.26 |

| $\textrm{AudioSR}$ | 3.01 | - | - |

PianoEval Results

| Model | ViSQOL ↑ | LSD ↓ | Score ↑ |

|---|---|---|---|

| $\textrm{Low-Resolution}$ | 4.36 | 1.09 | 72.92 |

| $\textrm{AERO}$ | 4.38 | 0.99 | 76.89 |

| $\textrm{AEROMamba}-{\textrm{HQ}}$ | 4.38 | 1.00 | 84.41 |

| $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 4.41 | 0.90 | 78.76 |

Models labeled with $\textrm{-HQ}$ were trained on PianoEval-HQ.

Performance Comparison (NVIDIA RTX 3090)

| Method | GPU Usage (MB) | Time (s) | Parameters |

|---|---|---|---|

| $\textrm{AERO}$ | 17091 | 1.246 | 19,432,958 |

| $\textrm{AEROMamba}$ | 3000 | 0.087 | 20,964,190 |
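As a quick check, the ratios implied by the table can be recomputed from its values. The sketch below only uses the numbers listed above; the variable names are illustrative.

```python
# Values copied from the performance table above
# (10-second samples, NVIDIA RTX 3090).
aero = {"gpu_mb": 17091, "time_s": 1.246, "params": 19_432_958}
aeromamba = {"gpu_mb": 3000, "time_s": 0.087, "params": 20_964_190}

# Inference speedup of AEROMamba relative to AERO.
speedup = aero["time_s"] / aeromamba["time_s"]

# Fraction of AERO's GPU memory that AEROMamba uses.
memory_ratio = aeromamba["gpu_mb"] / aero["gpu_mb"]

# Relative change in parameter count (AEROMamba is slightly larger).
param_increase = aeromamba["params"] / aero["params"] - 1.0

# Processing time per second of audio (10-second input).
real_time_factor = aeromamba["time_s"] / 10.0

print(f"speedup:          {speedup:.1f}x")
print(f"memory ratio:     {memory_ratio:.1%}")
print(f"parameter change: {param_increase:+.1%}")
print(f"real-time factor: {real_time_factor:.4f}")
```

The speedup comes out at roughly 14x and the memory ratio at a little under one-fifth, which matches the figures quoted in the abstract, while the parameter count grows by about 8%.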

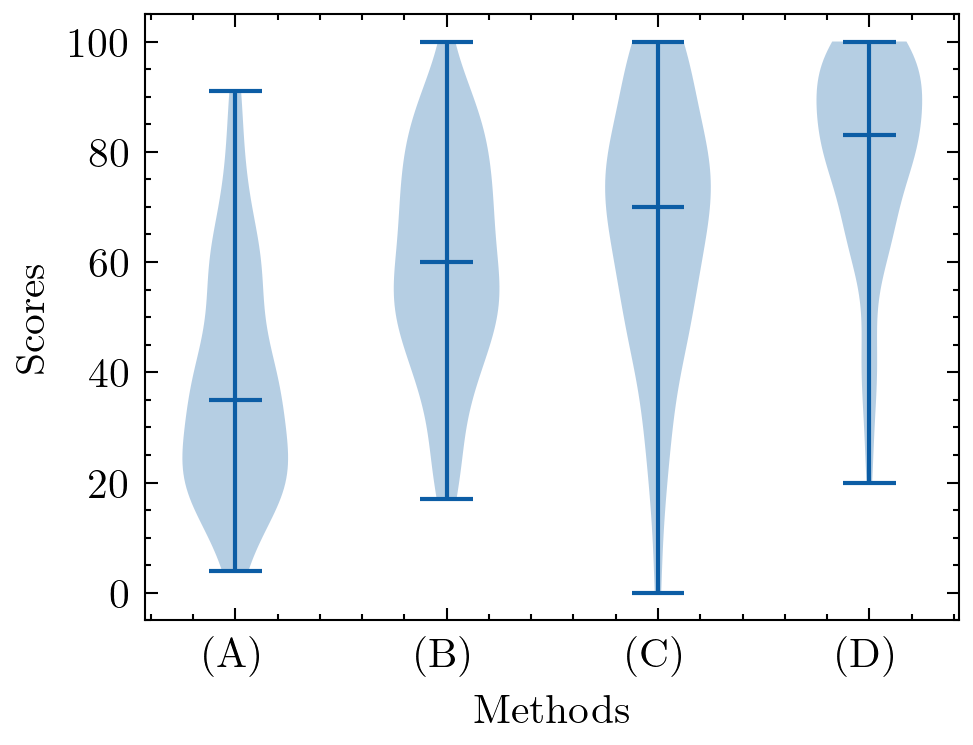

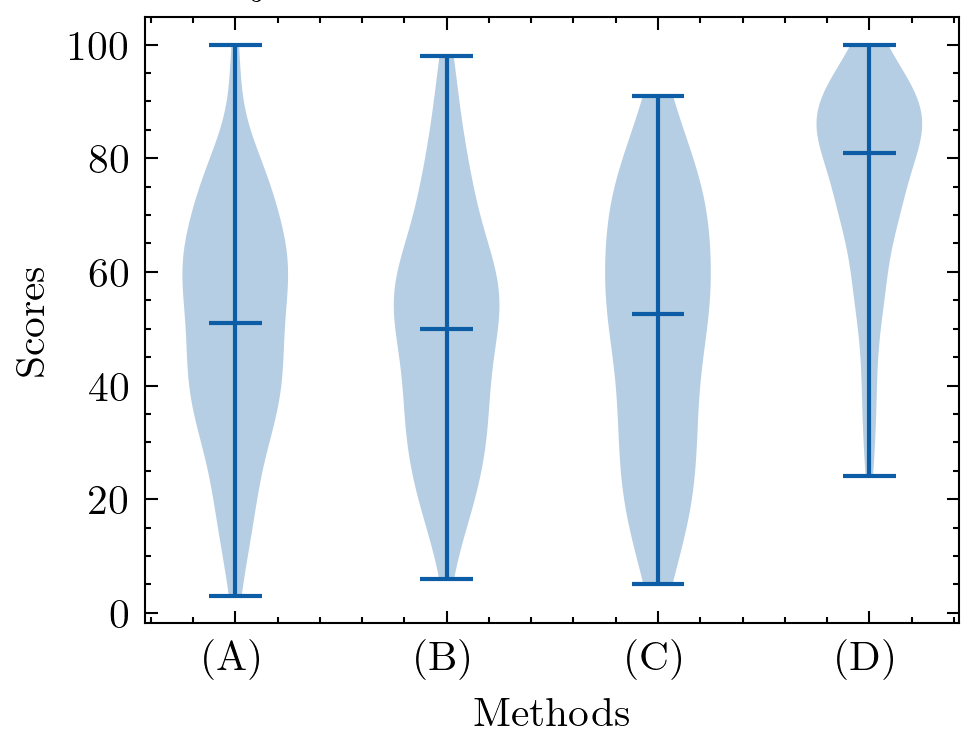

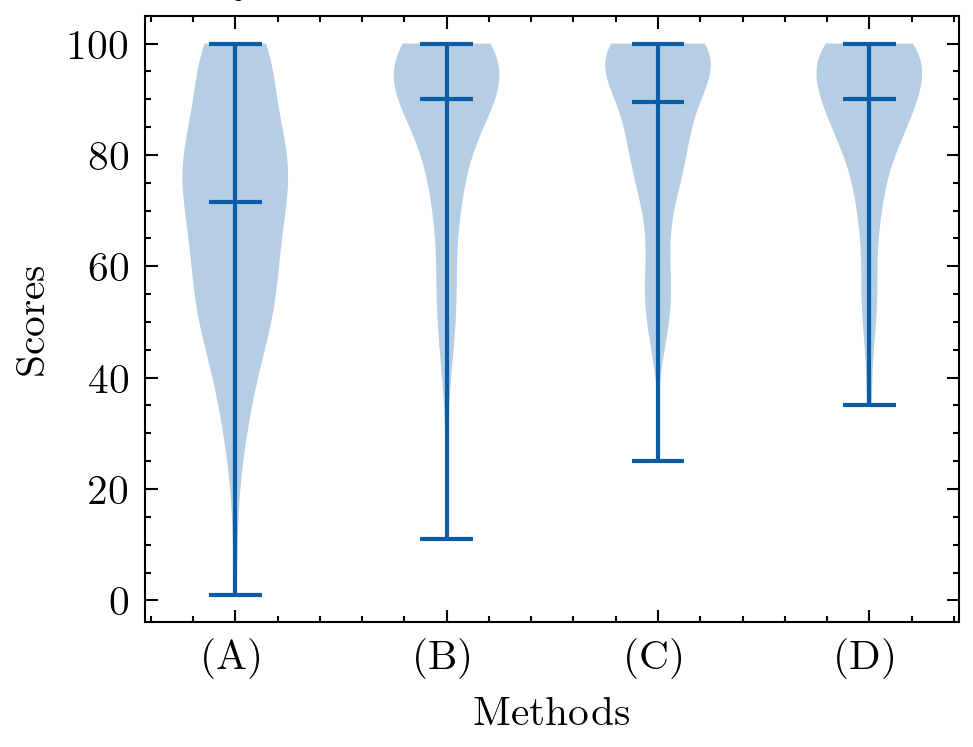

Subjective Score Distributions

MUSDB

PianoEval

Statistical Tests (Mann-Whitney U)

Pairwise comparisons of subjective scores (p-values). Values < 0.05 are considered statistically significant.

MUSDB (Subjective)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AERO}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AERO}$ | 0.0089 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AERO}$ | < 0.0001 |

PianoEval (Subjective)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}-{\textrm{HQ}}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 0.0587 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AERO}$ | 0.3399 |

| $\textrm{AEROMamba}-{\textrm{HQ}}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 0.0101 |

| $\textrm{AEROMamba}-{\textrm{HQ}}$ vs. $\textrm{AERO}$ | 0.0003 |

| $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ vs. $\textrm{AERO}$ | 0.2975 |

Pairwise comparisons of ViSQOL scores (p-values).

MUSDB (ViSQOL)

| Comparison | p-value |

|---|---|

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AERO}$ | 0.0007 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AERO}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{Low-Resolution}$ | < 0.0001 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{Low-Resolution}$ | < 0.0001 |

| $\textrm{AERO}$ vs. $\textrm{Low-Resolution}$ | < 0.0001 |

| $\textrm{AudioSR}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.2178 |

PianoEval (ViSQOL)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Resolution}$ vs. $\textrm{AERO}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AERO-HQ}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}$ | 0.2989 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}-{\textrm{HQ}}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | < 0.0001 |

| $\textrm{Low-Resolution}$ vs. $\textrm{AudioSR}$ | < 0.0001 |

| $\textrm{AERO}$ vs. $\textrm{AERO-HQ}$ | 0.1077 |

| $\textrm{AERO}$ vs. $\textrm{AEROMamba}$ | < 0.0001 |

| $\textrm{AERO}$ vs. $\textrm{AEROMamba}-{\textrm{HQ}}$ | 0.0215 |

| $\textrm{AERO}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.6028 |

| $\textrm{AERO}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 0.6917 |

| $\textrm{AERO}$ vs. $\textrm{AudioSR}$ | < 0.0001 |

| $\textrm{AERO-HQ}$ vs. $\textrm{AEROMamba}$ | < 0.0001 |

| $\textrm{AERO-HQ}$ vs. $\textrm{AEROMamba}-{\textrm{HQ}}$ | 0.0002 |

| $\textrm{AERO-HQ}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.2574 |

| $\textrm{AERO-HQ}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 0.2083 |

| $\textrm{AERO-HQ}$ vs. $\textrm{AudioSR}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}-{\textrm{HQ}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AudioSR}$ | < 0.0001 |

| $\textrm{AEROMamba}-{\textrm{HQ}}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.1172 |

| $\textrm{AEROMamba}-{\textrm{HQ}}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 0.0806 |

| $\textrm{AEROMamba}-{\textrm{HQ}}$ vs. $\textrm{AudioSR}$ | < 0.0001 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ | 0.8168 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AudioSR}$ | < 0.0001 |

| $\textrm{AEROMamba}_{\textrm{P}}-\textrm{HQ}$ vs. $\textrm{AudioSR}$ | < 0.0001 |
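For reference, the kind of test reported in the tables above can be sketched in pure Python. This is a minimal two-sided Mann-Whitney U test using the normal approximation with tie and continuity corrections. It is an assumption that this matches the variant used here, since the page does not say whether exact or asymptotic p-values were computed, and the scores in the usage example are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test (normal approximation).

    Returns (U statistic of sample x, p-value).
    """
    n1, n2 = len(x), len(y)
    n = n1 + n2
    # Pool both samples, remembering which group each value came from.
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])

    rank_sum_x = 0.0
    tie_term = 0.0
    i = 0
    while i < n:
        # Find the run of tied values starting at position i.
        j = i
        while j < n and pooled[j][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + 1 + j) / 2.0  # mean of ranks i+1 .. j
        t = j - i
        tie_term += t ** 3 - t
        rank_sum_x += avg_rank * sum(1 for k in range(i, j) if pooled[k][1] == 0)
        i = j

    u1 = rank_sum_x - n1 * (n1 + 1) / 2.0
    mean_u = n1 * n2 / 2.0
    var_u = n1 * n2 / 12.0 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:
        return u1, 1.0  # all values identical: no evidence of a difference

    # Continuity-corrected z score, clipped at zero.
    z = max((abs(u1 - mean_u) - 0.5) / sqrt(var_u), 0.0)
    p = 2.0 * (1.0 - NormalDist().cdf(z))
    return u1, min(p, 1.0)


# Hypothetical listening-test scores (0-100) for two systems.
scores_a = [62, 70, 55, 68, 74, 60, 66, 71]
scores_b = [80, 77, 85, 79, 90, 76, 83, 88]
u_stat, p_value = mann_whitney_u(scores_a, scores_b)
print(f"U = {u_stat:.1f}, p = {p_value:.4f}, significant: {p_value < 0.05}")
```

The Mann-Whitney U test is rank-based, so it does not assume that the listening scores are normally distributed.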

Audio Examples: MUSDB

Tracks upsampled from 11.025 kHz to 44.1 kHz

| Track | Original ($\textrm{Low-Res}$) 11.025 kHz | Original ($\textrm{High-Res}$) 44.1 kHz | $\textrm{AERO}$ 11.025 → 44.1 kHz | $\textrm{AEROMamba}$ 11.025 → 44.1 kHz | $\textrm{AEROMamba}_{\textrm{P}}$ 11.025 → 44.1 kHz |

|---|---|---|---|---|---|

| 459 | | | | | |

| 480 | | | | | |

| 826 | | | | | |

| 625 | | | | | |

Results: Enhancement of Heavily Compressed Audio

Objective and subjective scores for low-bitrate (32 kbps MP3) signals and for the outputs of various models, evaluated on MUSDB and PianoEval.

MUSDB Results

| System | ViSQOL ↑ | LSD ↓ | Score ↑ |

|---|---|---|---|

| $\textrm{Low-Bitrate}$ | 1.80 | 2.02 | 50.7 |

| $\textrm{AEROMamba}$ | 2.45 | 1.24 | 49.8 |

| $\textrm{AEROMamba}_{\textrm{P}}$ | 2.99 | 1.27 | 49.7 |

| $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | 2.90 | 1.23 | 75.6 |

PianoEval Results

| System | ViSQOL ↑ | LSD ↓ | Score ↑ |

|---|---|---|---|

| $\textrm{Low-Bitrate}$ | 4.35 | 2.33 | 69.5 |

| $\textrm{AEROMamba}$ | 4.22 | 1.14 | 83.4 |

| $\textrm{AEROMamba}_{\textrm{P}}$ | 4.24 | 1.12 | 84.1 |

| $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | 4.41 | 1.13 | 85.5 |
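The page does not state how the percentage gains quoted in the abstract are derived from the subjective scores. The sketch below shows one reading that reproduces them from the tables on this page: the 15% figure matches an average over MUSDB and PianoEval in the super-resolution tables, while the 52% figure matches the MUSDB compressed-audio table alone. Treat this as an assumption, not as the authors' stated procedure.

```python
def relative_gain(new: float, ref: float) -> float:
    """Relative improvement of `new` over `ref` (0.15 means 15%)."""
    return (new - ref) / ref


# Super-resolution, subjective scores (MUSDB, PianoEval) from the tables above.
aeromamba_p_sr = (79.26 + 78.76) / 2  # AEROMamba_P and AEROMamba_P-HQ
aero_sr = (60.03 + 76.89) / 2         # AERO
gain_sr = relative_gain(aeromamba_p_sr, aero_sr)

# Compressed audio, subjective scores: AEROMamba_PS vs. AEROMamba_P.
gain_mp3_musdb = relative_gain(75.6, 49.7)
gain_mp3_piano = relative_gain(85.5, 84.1)

print(f"super-resolution, both datasets averaged: {gain_sr:.1%}")
print(f"compressed audio, MUSDB only:             {gain_mp3_musdb:.1%}")
print(f"compressed audio, PianoEval only:         {gain_mp3_piano:.1%}")
```

Under this reading, the gain on compressed piano recordings is much smaller than on MUSDB, which is consistent with the non-significant PianoEval subjective comparisons between the model variants.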

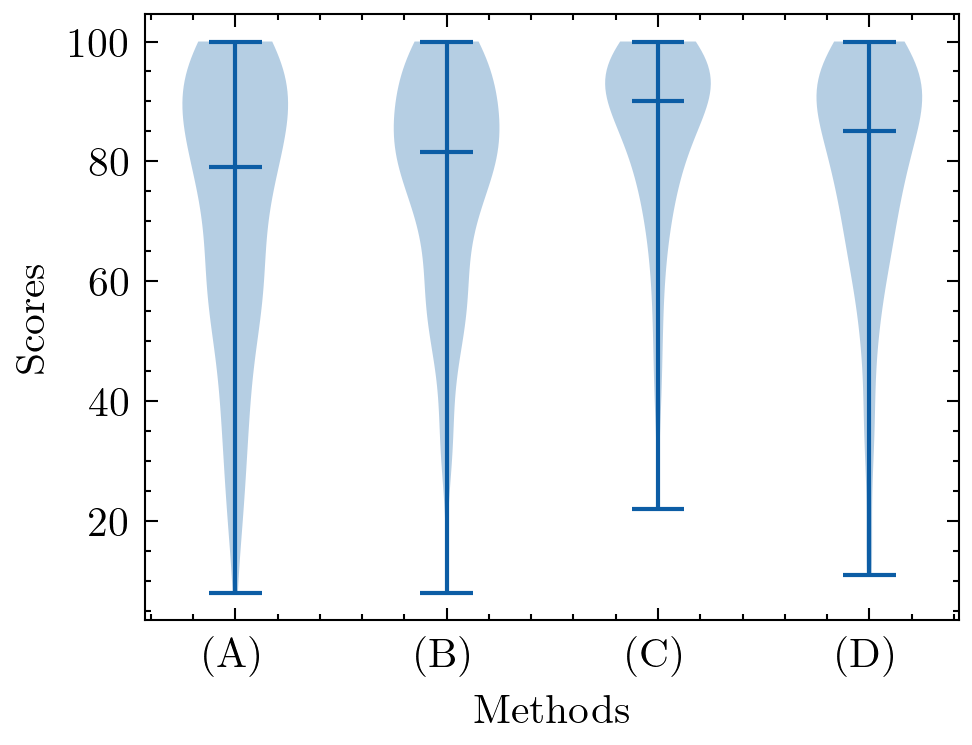

Subjective Score Distributions

MUSDB

PianoEval

Statistical Tests (Mann-Whitney U)

Pairwise comparisons of subjective scores (p-values). Values < 0.05 are considered statistically significant.

MUSDB (Subjective)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}$ | 0.7390 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.8233 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.8751 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

PianoEval (Subjective)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}$ | < 0.0001 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.9193 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | 0.7168 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | 0.7034 |

Pairwise comparisons of ViSQOL scores (p-values).

MUSDB (ViSQOL)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}$ | < 0.0001 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

PianoEval (ViSQOL)

| Comparison | p-value |

|---|---|

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}$ | < 0.0001 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | < 0.0001 |

| $\textrm{Low-Bitrate}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | 0.0052 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P}}$ | 0.3122 |

| $\textrm{AEROMamba}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

| $\textrm{AEROMamba}_{\textrm{P}}$ vs. $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ | < 0.0001 |

Audio Examples: MUSDB (Compressed)

Tracks restored from 32 kbps MP3; the high-resolution reference is sampled at 44.1 kHz

| Track | Low-Bitrate MP3 32 kbps | High-Res 44.1 kHz | $\textrm{AEROMamba}$ | $\textrm{AEROMamba}_{\textrm{P}}$ | $\textrm{AEROMamba}_{\textrm{P} \bar{\textrm{S}}}$ |

|---|---|---|---|---|---|

| 459 | | | | | |

| 480 | | | | | |

| 826 | | | | | |

| 625 | | | | | |

Extra: Super-resolution of Degraded Historical Recordings

Super-resolution of Alfred Cortot's historical piano recordings. This section shows how the predictions of the bandlimited-audio super-resolution models differ when they are trained on data that includes noisy recordings versus only high-quality recordings. The input tracks contain much more noise than any of the training data in either the HQ or the non-HQ dataset.

| Recording | Low Resolution | High Resolution | $\textrm{AEROMamba}$ | $\textrm{AEROMamba}\text{-}\textrm{HQ}$ | $\textrm{AEROMamba}_{\textrm{P}}$ | $\textrm{AEROMamba}_{\textrm{P}}\text{-}\textrm{HQ}$ |

|---|---|---|---|---|---|---|

| Cortot (1925) | | | | | | |

| Cortot (1942) | | | | | | |

PianoEval Dataset Metadata

The collected PianoEval dataset consists of two parts. The first is composed of the 24 Preludes for Piano, op. 28, by Chopin, performed by 33 pianists in 45 different recordings available on CD (Compact Disc), totaling approximately 22 hours. The second part contains excerpts of Ligeti piano études, a Schumann sonata, and the Barber sonata, each played by a different performer, totaling approximately 3.5 hours. Information about the performers, record labels, and recording years is detailed in the tables below.

Train/Validation

| Pianist | Label | Year |

|---|---|---|

| Arrau, C. | Columbia | 1950/1 |

| Arrau, C. | Philips | 1973 |

| Argerich, M. | DG | 1975 |

| Ashkenazy, V. | Decca | 1976 |

| Ashkenazy, V. | Decca | 1992 |

| Bolet, J. | RCA | 1974 |

| Blechacz, R. | DG | 2007 |

| Cherkassky, S. | ASV | 1968 |

| Cortot, A. | HMV | 1926 |

| Cortot, A. | HMV | 1933/4 |

| Cortot, A. | Gramophone | 1942 |

| Cortot, A. | Archipel | 1955 |

| Cortot, A. | EMI | 1957 |

| Davidovich, B. | Decca | 1979 |

| de Larrocha, A. | Decca | 1974 |

| Duchable, F. | Erato | 1988 |

| Dutra, G. | Yellow Tail | 1997 |

| El Bacha, A. R. | Forlane | 1999 |

| François, S. | EMI | 1959 |

| Freire, N. | Columbia | 1970 |

| Harasiewicz, A. | Philips | 1963 |

| Katsaris, C. | Sony | 1992 |

| Kissin, Y. | RCA | 1999 |

| Lima, A. M. | Caras | 1981 |

| Lucchesini, A. | EMI | 1988 |

| Magaloff, N. | Philips | 1975 |

| Novaes, G. | M&A | 1949 |

| Ohlsson, G. | EMI | 1974 |

| Ohlsson, G. | Hyperion | 1989 |

| Perahia, M. | Columbia | 1975 |

| Petri, E. | Columbia | 1942 |

| Pires, M. | Erato | 1975 |

| Pires, M. | DG | 1992 |

| Pogorelich, I. | DG | 1989 |

| Pollini, M. | DG | 1974 |

| Pollini, M. | DG | 2011 |

| Proença, M. | Delphos | 1999 |

| Rubinstein, A. | RCA | 1946 |

| Switala, W. | NIFC | 2006/7 |

| Tiempo, S. | Victor | 1990 |

| Varsi, D. | Genuin | 1988 |

Test

| Pianist | Label | Year |

|---|---|---|

| Glemser, B. | Naxos | 1993 |

| Pollack, D. | Naxos | 1995 |

| Aimard, P. L. | Sony | 1995 |

Subjective Test Tracklist

The following table maps the Question IDs (QID) used during the subjective listening tests to the corresponding audio tracks from the MUSDB and PianoEval datasets.

| QID | Track | QID | Track |

|---|---|---|---|

| 1 | electronic01 | 13 | electronic02 |

| 2 | rock01 | 14 | rock02 |

| 3 | pop01 | 15 | pop02 |

| 4 | hiphop01 | 16 | hiphop02 |

| 5 | latin01 | 17 | reggae01 |

| 6 | other01 | 18 | other02 |

| 7 | 02Barber | 19 | 04Barber |

| 8 | 14Ligeti | 20 | 17Ligeti |

| 9 | 05Ligeti | 21 | 15Ligeti |

| 10 | 07Barber | 22 | 08Barber |

| 11 | 03Schumann | 23 | 04Schumann |

| 12 | 02Schumann | 24 | 15Schumann |